Engineering teams now use AI tools to write code, review pull requests, and fix bugs faster. Many vendors promise large productivity gains. Real-world data shows mixed results. Some teams see clear speed and cost benefits. Others struggle to measure real business value.

This article shares verified AI tool ROI statistics focused only on engineering teams. The data covers productivity, code quality, cost savings, adoption, and long-term impact. Each section groups related statistics so readers can judge where AI delivers value and where it creates risk.

AI Tool Adoption and Usage Metrics

AI tool adoption shows whether engineering teams actually use AI in daily work. High usage alone does not guarantee ROI. Teams must track how often engineers accept AI suggestions and how well the tools fit into real workflows. Balanced adoption helps teams gain speed without hurting code quality. Poor adoption and blind trust both reduce long-term value.

Key statistics:

- High-performing engineering teams achieve 60 to 70% weekly AI tool adoption when they measure usage and improve workflows regularly.

- Healthy AI coding setups show 25 to 40% suggestion acceptance rates, which reflect proper tool setup and active human review.

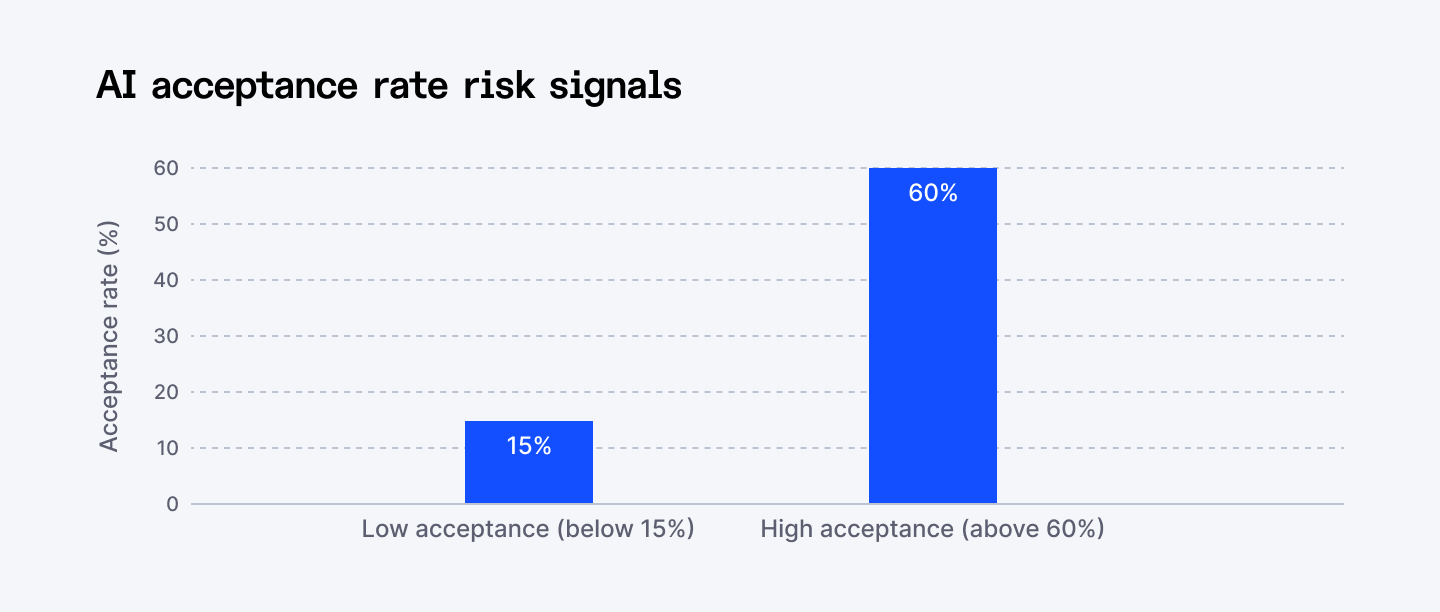

- Acceptance rates below 15% signal poor configuration, low trust, or unclear use cases.

- Acceptance rates above 60% indicate over-reliance on AI and reduced critical thinking.

- More than 75% of developers use AI coding assistants, yet many organizations still fail to see matching improvements in delivery speed or business outcomes.
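
The acceptance-rate bands above can be turned into a simple check. The sketch below is a minimal illustration, not a standard: the function names `acceptance_rate` and `classify_acceptance` are hypothetical, and because the figures above say nothing about rates between 15 and 25% or between 40 and 60%, the code reports those as unclassified rather than guessing.

```python
def acceptance_rate(accepted: int, shown: int) -> float:
    """Return the share of AI suggestions that engineers accepted, in percent."""
    if shown <= 0:
        raise ValueError("shown must be a positive number of suggestions")
    return 100.0 * accepted / shown


def classify_acceptance(rate_pct: float) -> str:
    """Map an acceptance rate (in percent) to the bands quoted in the article."""
    if rate_pct < 15:
        return "low"          # poor configuration, low trust, or unclear use cases
    if 25 <= rate_pct <= 40:
        return "healthy"      # proper tool setup and active human review
    if rate_pct > 60:
        return "high"         # possible over-reliance on AI
    # Assumption: the article does not label 15-25% or 40-60%.
    return "unclassified"


if __name__ == "__main__":
    rate = acceptance_rate(accepted=30, shown=100)
    print(f"{rate:.1f}% -> {classify_acceptance(rate)}")
```

A team could run a check like this weekly against the counts its AI tool reports, and treat a move into the low or high band as a prompt to review configuration or review practices.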

Developer Productivity and Delivery Speed

This section focuses on how AI tools affect developer output and task completion speed. Productivity gains vary widely across teams because they depend on task type, experience level, and review practices. Measured data shows steady improvements, but not the extreme gains often claimed in marketing.

Key statistics:

- Real organizations report productivity improvements of only 0.3x to 1x, far below the 2x gains that AI tool vendors commonly claim.

- Teams that measure results over 30-day periods report 20 to 40% faster task completion, especially for routine engineering work.

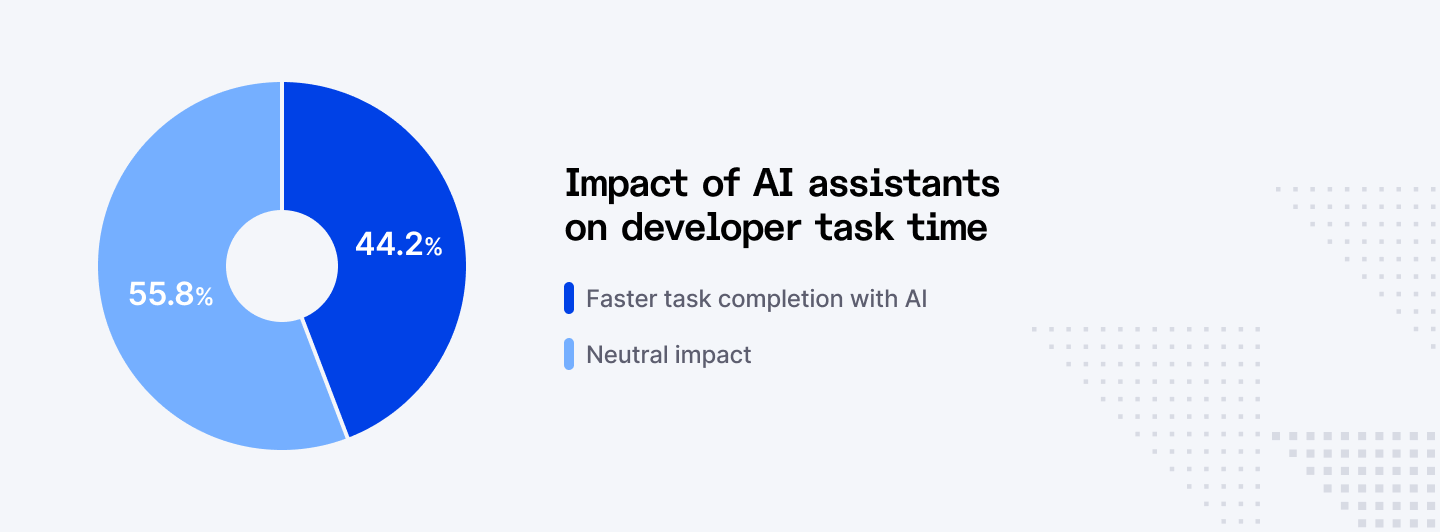

- In controlled studies, developers using AI assistants completed tasks 55.8% faster, but this improvement required time spent on prompt writing and output validation.

- Developers save an average of 2 to 3 hours per week, while power users save more than 5 hours per week through AI-assisted coding.

- A randomized controlled trial by METR found that experienced open-source developers took around 19% longer when using AI tools, due to verification and context-switching overhead.

Workflow Level Efficiency Gains

AI tools deliver the strongest ROI when teams use them in specific engineering workflows. These gains appear faster in focused tasks than in full feature delivery. Teams see the best results when AI supports repetitive and well-defined work.

Key statistics:

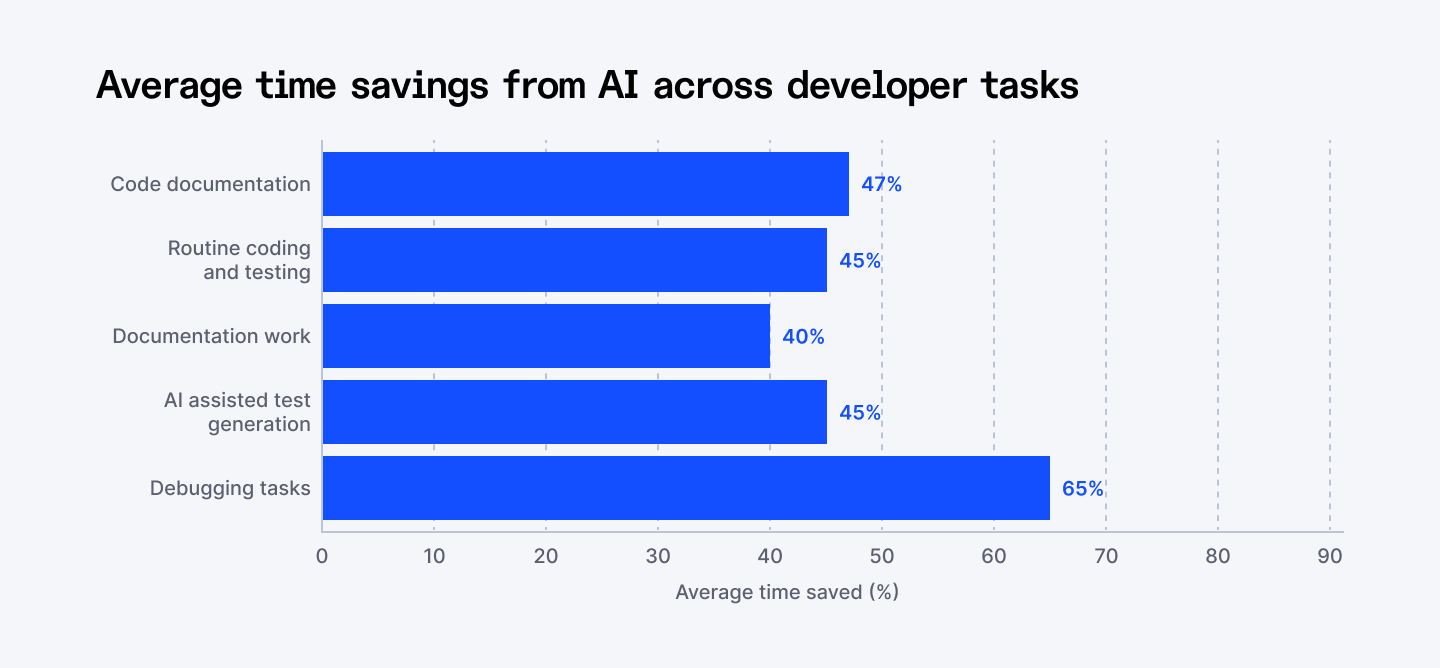

- Debugging tasks show the highest gains, with 60 to 70% reduction in time for stack trace analysis and error investigation.

- AI-assisted test generation completes 40 to 50% faster, which helps teams improve coverage without adding QA effort.

- Documentation work finishes 35 to 45% faster when AI generates summaries and code explanations.

- Teams report 30 to 60% time savings on routine coding and testing tasks when AI tools integrate well into existing workflows.

- Code documentation alone takes 45 to 50% less time, freeing engineers to focus on design and problem solving.

Code Review and Pull Request Impact

AI tools increase how much code engineers produce, but they also change how teams review that code. Faster code creation does not always mean faster delivery. Human review, approval, and discussion now decide overall speed. Teams that adjust review rules and ownership see gains, while others face longer queues and delays.

Key statistics:

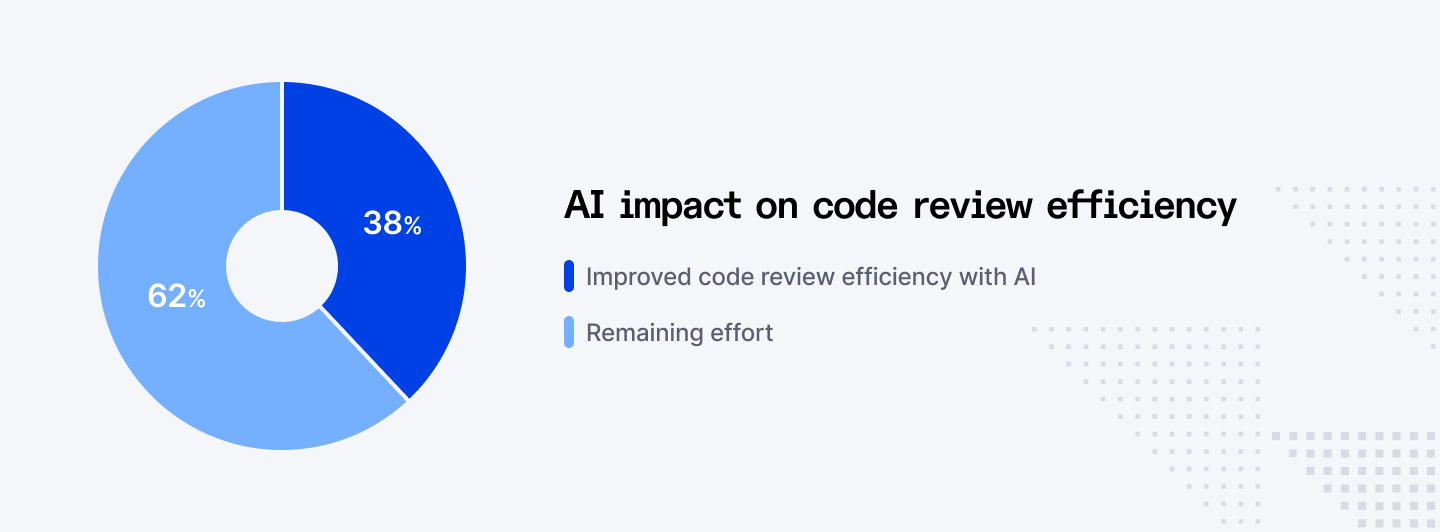

- Teams achieve a 38% increase in code review efficiency when AI handles routine checks and formatting tasks.

- Heavy AI users merge nearly five times more pull requests per week compared to engineers who do not use AI tools.

- Developers on teams with high AI adoption complete 21% more tasks and merge 98% more pull requests.

- Review time increases by 91% in high adoption teams because human approval becomes the main constraint.

- AI-assisted development shifts reviews away from syntax checks, leading to a 30 to 50% drop in basic error catching and more focus on architecture and design discussions.

Code Quality and Risk Control

AI tools can improve speed, but they also affect code quality and system risk. Teams must track bugs, security issues, and pull request size to avoid long-term damage. Strong guardrails help teams gain ROI without hurting stability.

Key statistics:

- Well-managed engineering teams maintain 0.5 to 2 bugs per 1,000 lines of code even after adopting AI tools.

- Teams with heavy AI usage see a 9% increase in bugs per developer, which shows the risk of weak review practices.

- Academic studies found that 29.1% of AI-generated Python code contains security weaknesses, which requires strict human review.

- High AI adoption is linked to a 154% increase in average pull request size, which makes reviews harder and slower.

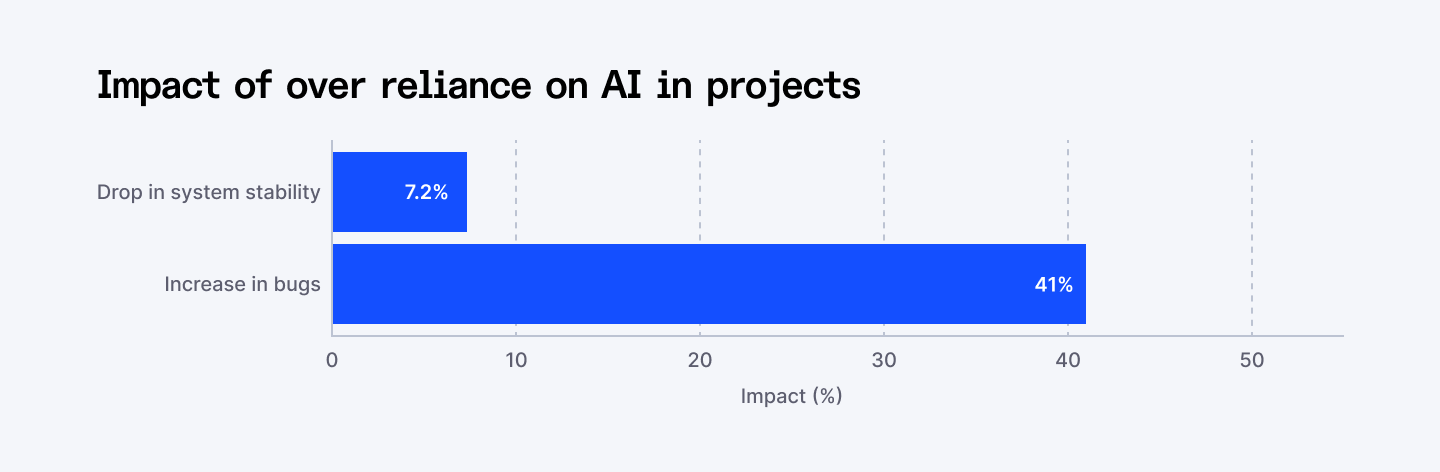

- Projects that rely too much on AI report 41% more bugs and a 7.2% drop in system stability.
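
The bugs-per-1,000-lines figure above is a defect density, which is straightforward to track. The following is a minimal sketch under stated assumptions: `defect_density` and `within_benchmark` are hypothetical helper names, and the 0.5 to 2 range is simply the benchmark quoted in the list, not a universal threshold.

```python
def defect_density(bugs: int, lines_of_code: int) -> float:
    """Return bugs per 1,000 lines of code."""
    if lines_of_code <= 0:
        raise ValueError("lines_of_code must be positive")
    return bugs / (lines_of_code / 1000)


def within_benchmark(density: float, low: float = 0.5, high: float = 2.0) -> bool:
    """Check a density against the 0.5-2 bugs/KLOC range quoted in the article."""
    return low <= density <= high


if __name__ == "__main__":
    # Illustrative inputs only: 12 bugs found in 8,000 lines of shipped code.
    density = defect_density(bugs=12, lines_of_code=8_000)
    print(f"{density:.2f} bugs/KLOC, within benchmark: {within_benchmark(density)}")
```

Comparing this number before and after AI adoption, over the same kind of work, gives a rough signal of whether speed gains are coming at the expense of quality.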

Cost Savings and ROI Impact

AI tools show real ROI when teams translate time savings into financial value. Faster reviews, early issue detection, and better documentation reduce hidden engineering costs. These benefits grow as team size and system complexity increase. Teams that track cost impact, not just speed, see clearer business results.

Key statistics:

- A 38% improvement in code review efficiency reduces manual effort and lowers engineering labor costs.

- AI-generated documentation and consistent coding patterns reduce compliance audit preparation time by 40 to 60%, which lowers legal and consulting expenses.

- Reduced review workload allows a senior engineer earning $200,000 per year to recover nearly $76,000 in opportunity cost.

- Automated security checks during development help teams avoid breaches that cost $4.45 million on average.

- Early issue detection reduces developer context switching, a problem that can consume around 25% of total productivity.
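
The opportunity-cost figure above appears to be the 38% review efficiency gain applied to the full 200,000 dollar salary, since 38% of 200,000 is 76,000. That implicitly treats all of the engineer's time as review time. The sketch below reproduces the arithmetic and adds a hypothetical `review_share` parameter (not from the source data) so teams can plug in the fraction of time their engineers actually spend on review.

```python
def recovered_opportunity_cost(
    annual_salary: float,
    efficiency_gain_pct: float = 38.0,   # review efficiency gain quoted in the article
    review_share: float = 1.0,           # assumption: fraction of time spent on review
) -> float:
    """Estimate the salary value of review time freed up by AI tooling."""
    if not 0.0 <= review_share <= 1.0:
        raise ValueError("review_share must be between 0 and 1")
    if efficiency_gain_pct < 0:
        raise ValueError("efficiency_gain_pct must not be negative")
    return annual_salary * review_share * efficiency_gain_pct / 100


if __name__ == "__main__":
    # Reproduces the article's figure (review_share left at 1.0).
    print(recovered_opportunity_cost(200_000))
    # A more conservative, purely illustrative case: 25% of time spent on review.
    print(recovered_opportunity_cost(200_000, review_share=0.25))
```

With a realistic `review_share`, the recovered value is proportionally smaller, so it is worth measuring review time before quoting a savings figure.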

Developer Experience and Focus

AI tools also affect how engineers feel and work during the day. Better focus and fewer interruptions support long-term productivity. These gains do not always show up in short-term delivery metrics, but they matter for team health and retention.

Key statistics:

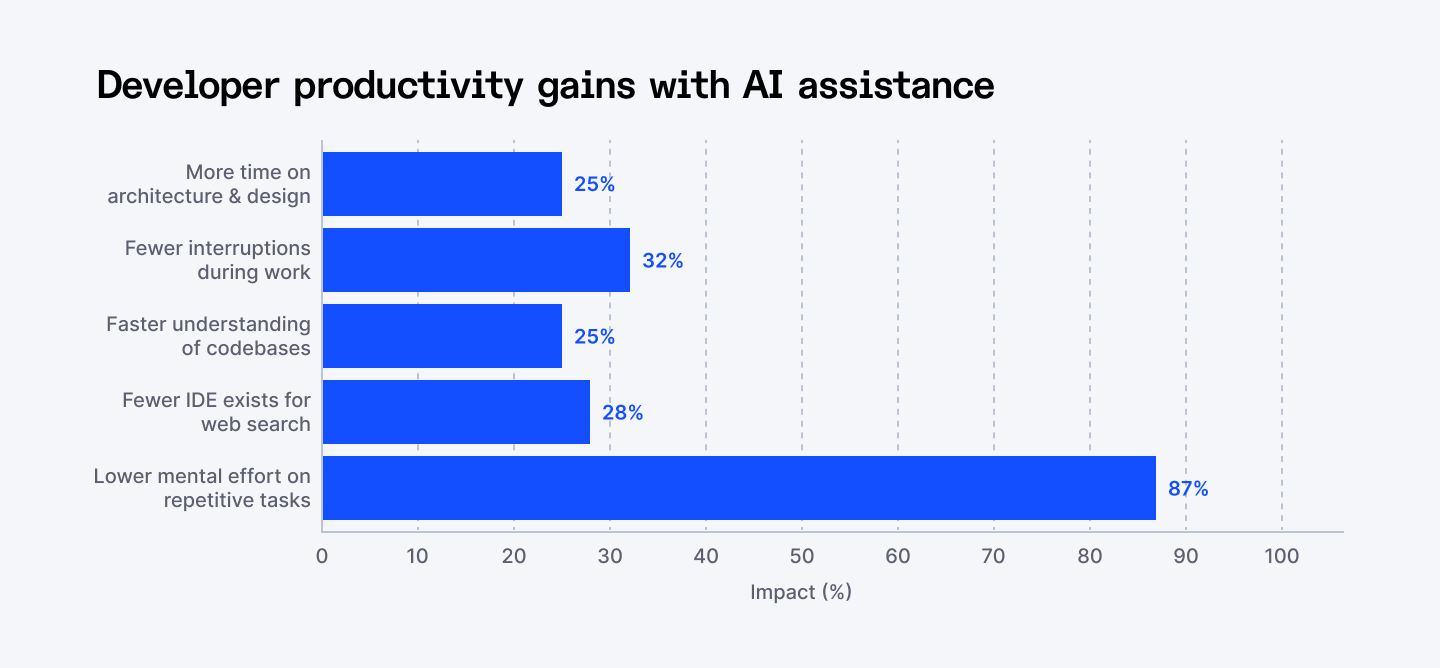

- 73% of developers report that AI tools help them stay in a flow state while coding.

- Between 60 and 75% of users feel more fulfilled and less frustrated when AI supports daily development work.

- Teams experience 25 to 40% fewer interruptions when AI handles routine coding, searches, and formatting.

- Developers report 87% lower mental effort on repetitive tasks with AI assistance.

- Developers understand unfamiliar codebases 25% faster with AI-generated explanations and summaries.

- Engineers spend 20 to 30% more time on architecture and design decisions because AI handles implementation details.

- Developers leave their IDE 28% less often to search the web when using AI tools.

- New team members onboard 2 to 3 times faster when AI explains code structure and logic.

Measurement Challenges and ROI Failure Risks

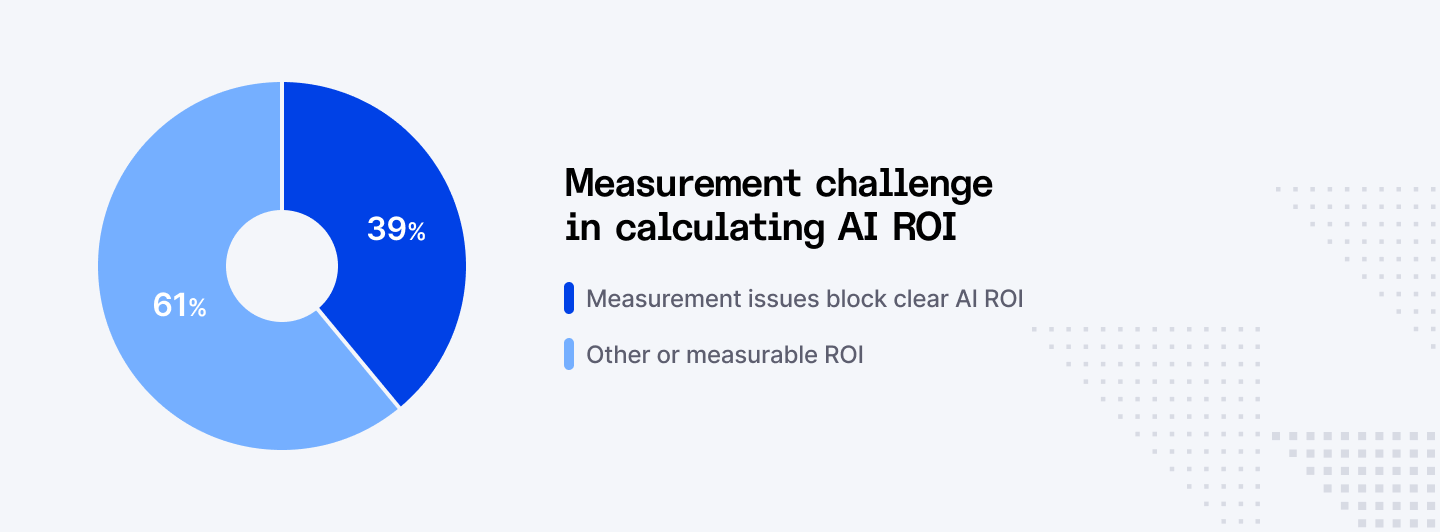

Many engineering teams adopt AI tools but fail to prove real ROI. The main reason is poor measurement. Teams often track activity metrics instead of business outcomes. Short testing periods also hide quality issues and long-term costs. Without clear benchmarks, AI value stays unclear.

Key statistics:

- Only 5% of generative AI pilots deliver sustained value at scale, even though early results often look positive.

- 39% of executives report that measurement problems prevent them from calculating AI ROI clearly.

- Teams that rely on short pilots often abandon AI adoption, with 30% of projects stopping after proof of concept.

- Effective A/B tests require 8 to 12 weeks to capture coding, review, and production impact. Testing periods shorter than full development cycles fail to reveal quality and review issues that appear later.

Short Term vs Long Term ROI Timelines

AI tool ROI does not appear at the same pace for every use case. Simple automation delivers faster results, while advanced AI needs deeper system changes. Teams that expect instant gains from complex AI are often disappointed.

Key statistics:

- Basic automation use cases show ROI within 1 to 2 years because they need low integration effort.

- 45% of teams expect fast ROI from simple AI features like code suggestions and test generation.

- Advanced and agent-based AI often takes 3 years or more to deliver ROI due to workflow redesign and governance needs.

- 60% of teams expect longer ROI timelines for higher-level AI systems.

- Product teams that follow core AI best practices report a median ROI of 55%.

- Organizations that invest 5% or more of their budget in AI see better returns only when they measure results consistently.
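
The statistics above do not say how each ROI figure was computed. A common definition is net benefit divided by cost, and the sketch below uses that definition as an assumption. The helper names, the 46 working weeks, and all example inputs are hypothetical and only illustrate the arithmetic.

```python
def annual_time_savings_value(
    hours_saved_per_week: float,
    loaded_hourly_cost: float,
    engineers: int,
    working_weeks: int = 46,  # assumption: weeks worked per year after leave
) -> float:
    """Convert weekly hours saved per engineer into an annual monetary value."""
    return hours_saved_per_week * loaded_hourly_cost * engineers * working_weeks


def roi_percent(total_benefit: float, total_cost: float) -> float:
    """Return ROI as a percentage: net benefit relative to cost."""
    if total_cost <= 0:
        raise ValueError("total_cost must be positive")
    return 100 * (total_benefit - total_cost) / total_cost


if __name__ == "__main__":
    # Illustrative inputs: 10 engineers each saving 2 hours per week.
    benefit = annual_time_savings_value(
        hours_saved_per_week=2, loaded_hourly_cost=100.0, engineers=10
    )
    # Hypothetical yearly cost of licences, rollout, and training.
    cost = 40_000.0
    print(f"Annual benefit: {benefit:,.0f}")
    print(f"ROI: {roi_percent(benefit, cost):.1f}%")
```

Because hours saved do not automatically become delivered value, a calculation like this is best treated as an upper bound and checked against delivery and quality metrics over a full development cycle.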

Final Words

AI tools deliver real value for engineering teams, but only under the right conditions. The data shows that AI improves speed, reduces manual effort, and lowers costs when teams focus on clear use cases and strong workflows. At the same time, poor measurement, weak review practices, and unrealistic expectations reduce ROI.

Engineering leaders should track adoption, productivity, quality, and cost together. They should test AI tools over full development cycles and treat AI as part of the engineering system, not a shortcut. Teams that balance speed with quality and governance see the most reliable and lasting ROI.

➡︎ Building engineering teams that maximize AI tool ROI? Index.dev connects you with developers who know how to implement AI coding assistants, measure productivity gains, and maintain code quality at scale. Hire engineers who understand the difference between adoption and real value.

Data Sources

- https://newsletter.eng-leadership.com/p/how-to-measure-ai-impact-in-engineering

- https://getdx.com/blog/measure-ai-impact/

- https://ctoexecutiveinsights.com/blog/quantifying-ai-tool-roi-for-engineering-teams

- https://www.businessvalueengineering.com/articles/unveiling-the-metrics-how-to-quantify-the-business-value-of-ai

- https://www.forbes.com/sites/forbes-research/2025/10/08/ai-roi-measurement-challenges-forbes-survey-2025/

- https://www.ibm.com/think/insights/ai-roi

- https://metr.org/blog/2025-07-10-early-2025-ai-experienced-os-dev-study

- https://survey.stackoverflow.co/2024/ai

- http://faros.ai/ai-productivity-paradox

- https://www.faros.ai/blog/ai-software-engineering

- https://sourcegraph.com/case-studies/qualtrics-speeds-up-unit-tests-and-code-understanding-with-cody

- https://www.worklytics.co/resources/calculating-roi-generative-ai-tools-worklytics-framework

- https://www.worklytics.co/blog/adoption-to-efficiency-measuring-copilot-success

- https://www.atomicwork.com/reports/state-of-ai-in-it-2025

- https://github.blog/news-insights/research/research-quantifying-github-copilots-impact-on-developer-productivity-and-happiness/

- https://www.secondtalent.com/resources/top-ai-developer-tools-that-cut-engineering-time/

- https://www.gitclear.com/ai_assistant_code_quality_2025_research

- https://www.allaboutai.com/resources/ai-statistics/ai-in-software-development/

- https://www.deloitte.com/us/en/insights/topics/digital-transformation/ai-tech-investment-roi.html

- https://mlq.ai/media/quarterly_decks/v0.1_State_of_AI_in_Business_2025_Report.pdf

- https://www.ademero.com/ai-resources/case-studies/manufacturing-predictive-maintenance

- https://www.worklytics.co/blog/impact-of-ai-in-businesses

- https://www.mckinsey.com/capabilities/tech-and-ai/our-insights/unleashing-developer-productivity-with-generative-ai