Enterprise AI systems are rapidly moving into production, but security controls are not keeping pace.

This Enterprise AI Security Risk Statistics roundup presents 40+ verified statistics covering AI breaches, access failures, shadow AI usage, attack methods, financial impact, and regulatory risk across real enterprise environments.

Each data point highlights how rising AI adoption is expanding security exposure for organizations worldwide. For full transparency, all data sources are listed at the end of this article so readers can verify every figure.

Key AI Security Incident Statistics

- Enterprise AI and machine learning usage has increased by 3,000% year over year, dramatically expanding the attack surface for AI-powered systems.

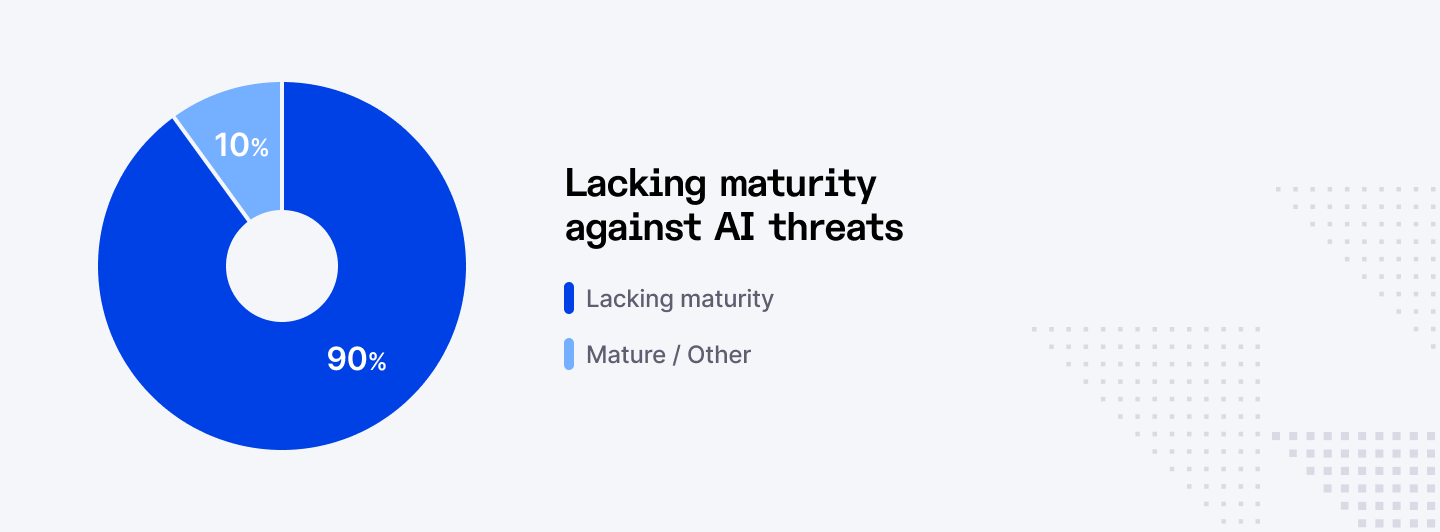

- 90% of organizations implementing or planning large language model use cases report that they lack the maturity required to defend against AI-enabled threats.

- Only 5% of organizations deploying AI systems say they are confident in their ability to secure AI models and data pipelines.

- 89% of IT leaders state that AI models are now critical to their business operations, increasing the potential impact of security failures.

- 86% of organizations experienced at least one AI-related security incident within the past 12 months.

- 13% of organizations report confirmed breaches of AI models or AI-powered applications in production environments.

- 97% of organizations that suffered AI-related breaches lacked proper access controls for AI systems at the time of the incident.

- 59.9% of AI and machine learning transactions are blocked due to security and policy violations, showing how often risky activity is detected.

- 20% of organizations have already suffered security breaches caused by unsanctioned or shadow AI usage.

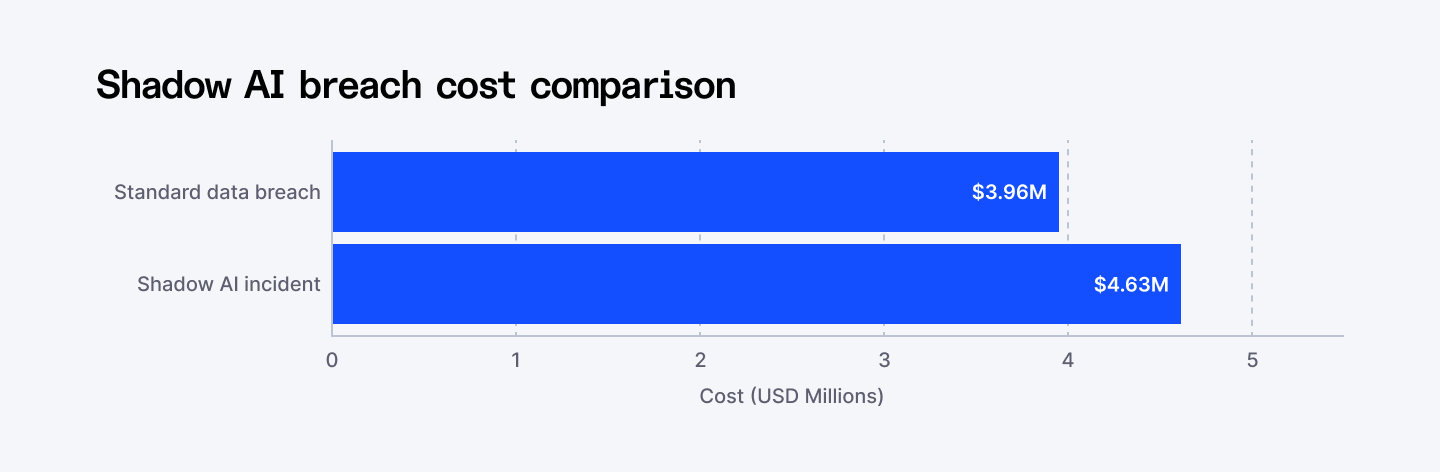

- Shadow AI incidents add an average of USD 670,000 in additional breach costs compared with standard data breach events.

AI Adoption and the Security Maturity Gap

Enterprises are deploying AI rapidly without matching investments in governance and security. This gap leaves models, data pipelines, and automated workflows exposed. As AI becomes business-critical, weak security maturity increases the risk of major operational and data failures.

- Enterprise AI and machine learning usage has increased by 3,000% year over year, showing how rapidly organizations are deploying AI into production environments.

- 90% of organizations implementing or planning large language model use cases say they lack the maturity required to defend against AI-enabled threats.

- Only 5% of organizations deploying AI systems report confidence in their ability to secure AI models and data pipelines.

- 89% of IT leaders say AI models are now critical to their business operations, increasing the potential impact of any security failure.

- 77% of organizations lack foundational data and AI security practices, leaving core AI infrastructure exposed to misuse and attacks.

AI Breaches and Access Control Failures

Missing access controls are the most common weakness among organizations that report AI breaches. Many organizations expose AI systems through APIs and integrations without proper safeguards. Limited monitoring means some companies do not even know whether their AI systems have already been compromised.

- 13% of organizations have reported confirmed breaches of AI models or AI-powered applications running in production environments.

- 8% of organizations say they do not know whether their AI systems or models have already been compromised, highlighting major blind spots in monitoring and detection.

- 97% of organizations that suffered AI-related breaches lacked proper access controls on their AI systems at the time of the incident.

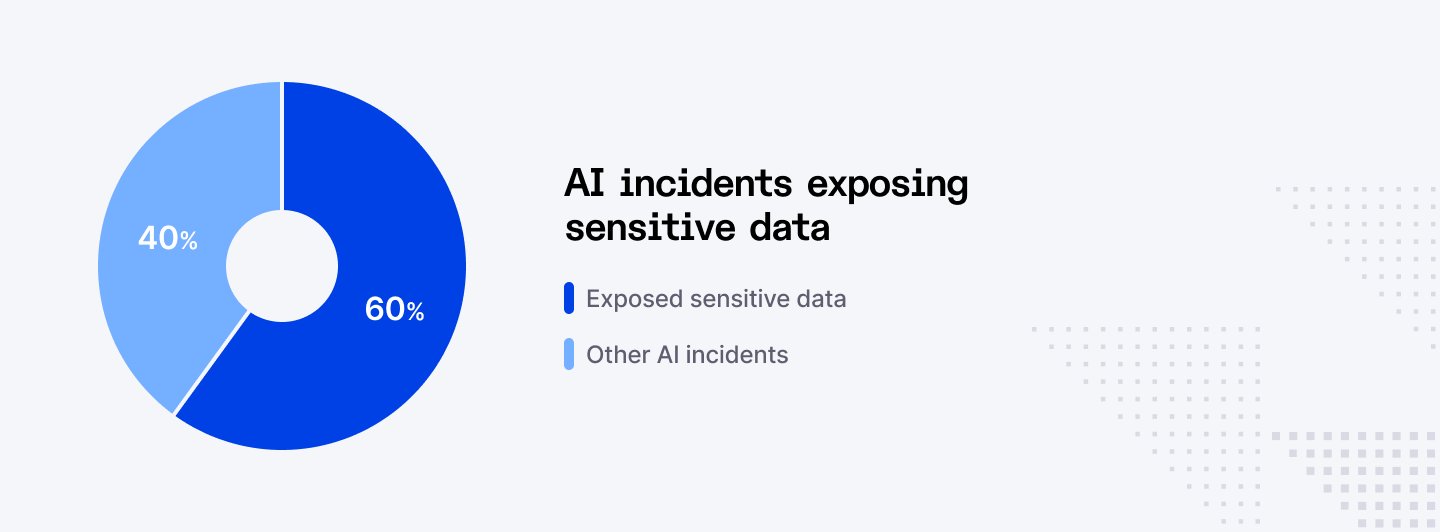

- 60% of AI-related security incidents resulted in sensitive data being exposed, leaked, or exfiltrated from enterprise environments.

- 31% of AI incidents caused direct operational disruption, including system outages, workflow failures, or service degradation.
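
To make the access control point concrete, here is a minimal sketch of a permission check placed in front of an AI model endpoint. The role names, permission strings, and the `AccessGate` class are hypothetical and exist only for illustration; a real deployment would more likely rely on an identity provider or a policy engine.

```python
from dataclasses import dataclass, field

# Hypothetical role-to-permission mapping (an assumption for this sketch).
ROLE_PERMISSIONS = {
    "analyst": {"model:query"},
    "ml_engineer": {"model:query", "model:deploy"},
    "admin": {"model:query", "model:deploy", "model:export"},
}


@dataclass
class AccessGate:
    """Deny-by-default check that records every decision for later review."""

    audit_log: list = field(default_factory=list)

    def authorize(self, user: str, role: str, action: str) -> bool:
        # Unknown roles get an empty permission set, so they are always denied.
        allowed = action in ROLE_PERMISSIONS.get(role, set())
        self.audit_log.append(
            {"user": user, "role": role, "action": action, "allowed": allowed}
        )
        return allowed


gate = AccessGate()
print(gate.authorize("dana", "analyst", "model:query"))   # True
print(gate.authorize("dana", "analyst", "model:export"))  # False
print(gate.authorize("bot-7", "unknown", "model:query"))  # False
```

The sketch denies by default and logs each decision, which touches on the two gaps described in this section: missing access controls and limited monitoring.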

Shadow AI and Uncontrolled AI Usage

Shadow AI is a growing threat driven by employees using unapproved tools. These tools bypass security controls and often handle sensitive data. As a result, shadow AI incidents are more costly and harder to detect than traditional enterprise breaches.

- 20% of organizations have already experienced security breaches caused by shadow AI tools operating outside approved IT environments.

- Shadow AI incidents cost an average of USD 4.63 million per breach, compared with USD 3.96 million for standard enterprise data breaches.

- 65% of shadow AI-related breaches compromise customers' personally identifiable information, compared with 53% of enterprise breaches overall.

- 40% of shadow AI incidents result in the exposure or theft of intellectual property, including source code, product designs, and proprietary models.

- 62% of shadow AI activity spans multiple cloud and on-premise environments, making detection and containment significantly more difficult.
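
As a quick check on the cost figures in this section, the short sketch below works out the shadow AI premium from the two averages quoted above. The variable names are illustrative and the inputs are simply the article's figures.

```python
# Average breach costs quoted in this section, in USD.
shadow_ai_cost_usd = 4_630_000
standard_cost_usd = 3_960_000

# Absolute and relative premium attributable to shadow AI involvement.
premium_usd = shadow_ai_cost_usd - standard_cost_usd
premium_pct = premium_usd / standard_cost_usd * 100

print(f"Shadow AI premium: USD {premium_usd:,}")  # Shadow AI premium: USD 670,000
print(f"Relative increase: {premium_pct:.1f}%")   # Relative increase: 16.9%
```

The USD 670,000 difference matches the additional cost cited for shadow AI incidents, which amounts to roughly a 17% premium over a standard breach.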

AI-Driven Attack Methods

Attackers now use AI to automate phishing, impersonation, and intrusion techniques. These attacks scale faster and evade traditional defenses more easily. AI-powered attacks are changing how cybercrime works and increasing breach success rates.

- Phishing attacks have increased by 1,265% since the release of ChatGPT, driven by the automation of email and social engineering campaigns.

- 82.6% of phishing emails are now generated or enhanced using artificial intelligence to improve realism and evade detection.

- 16% of enterprise data breaches now involve attackers using AI to automate or optimize their intrusion techniques.

- 37% of AI-driven attacks use phishing as the primary initial access method, making it the most common AI-enabled attack vector.

- 35% of AI-powered attacks rely on deepfake impersonation to trick employees into transferring data, credentials, or money.

Financial Impact of AI Security Incidents

AI breaches carry high financial damage due to data loss, downtime, and penalties. Incidents involving AI often cost more than standard breaches because they affect core systems, customer data, and proprietary models.

- The global average cost of a data breach reached USD 4.44 million in 2025, marking the first decline in five years but remaining historically high.

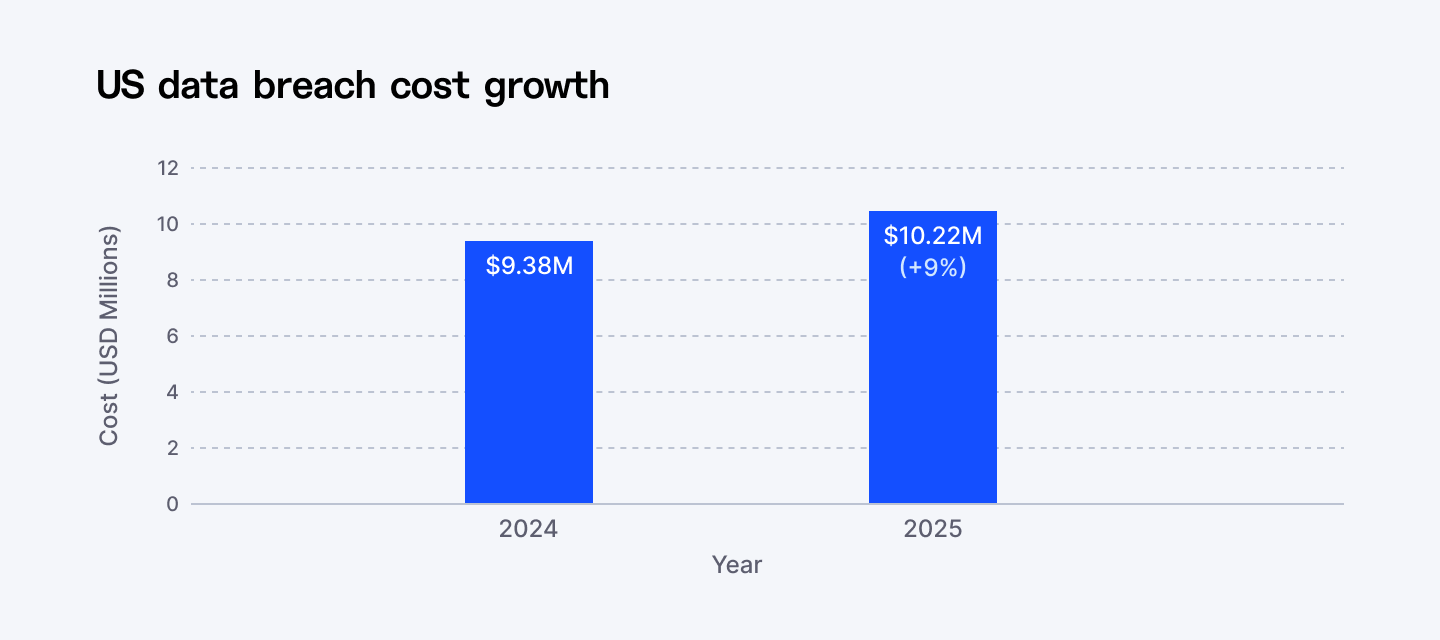

- The average cost of a data breach in the United States increased to USD 10.22 million, a 9% rise from the previous year.

- Healthcare organizations face the highest average breach costs at USD 7.42 million per incident, and healthcare has been the most expensive industry for breaches for 14 consecutive years.

- Breaches involving shadow AI have an average cost of USD 4.63 million, making them more expensive than standard enterprise breaches.

- Incidents driven by AI-powered attacks average USD 4.49 million per breach, reflecting the high cost of sophisticated automated intrusions.

Data Exposure and Regulatory Consequences

AI breaches frequently expose sensitive personal and business data. These incidents trigger compliance actions, fines, and legal risk. Regulators now treat AI systems as high-risk data environments with strict accountability.

- 60% of AI-related security incidents lead to the exposure of sensitive data, including customer and employee information.

- 53% of enterprise breaches involve customer personally identifiable information, rising to 65% in shadow AI incidents.

- 32% of organizations that suffered AI-related breaches were required to pay regulatory fines or penalties.

- 48% of fined organizations paid more than USD 100,000 in regulatory penalties following an AI-related breach.

- 25% of fined organizations paid more than USD 250,000 in regulatory penalties for AI and data protection violations.
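
The fine percentages above apply only to the subset of breached organizations that were fined. The sketch below converts them into shares of all organizations that suffered AI-related breaches. It assumes the percentages can be multiplied directly, which holds only if each figure is measured against the group named in its bullet.

```python
# Figures from the list above, expressed as fractions.
fined_share = 0.32            # breached organizations that paid fines
over_100k_given_fined = 0.48  # fined organizations paying more than USD 100,000
over_250k_given_fined = 0.25  # fined organizations paying more than USD 250,000

# Share of all breached organizations that crossed each fine threshold.
over_100k_overall = fined_share * over_100k_given_fined
over_250k_overall = fined_share * over_250k_given_fined

print(f"Paid more than USD 100,000: {over_100k_overall * 100:.1f}%")  # 15.4%
print(f"Paid more than USD 250,000: {over_250k_overall * 100:.1f}%")  # 8.0%
```

Under that assumption, roughly one in seven breached organizations paid more than USD 100,000 in penalties.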

Detection Speed and Security Automation

Fast detection reduces breach damage and cost. Organizations using AI-driven security tools detect incidents earlier and limit losses. Slow detection remains one of the biggest reasons AI breaches become expensive.

- Organizations using extensive AI and security automation save an average of USD 1.9 million per breach compared with those without automation.

- Proper AI security controls reduce breach detection time by an average of 80 days compared with organizations lacking automated monitoring.

- The global mean time to identify and contain a data breach fell to 241 days, the lowest level recorded in nine years.

- Breaches that take more than 200 days to detect cost an average of USD 5.01 million, making delayed detection one of the biggest cost drivers.

- Multi-environment breaches spanning cloud and on-premise systems cost an average of USD 5.05 million, reflecting the complexity of detecting AI incidents across distributed environments.

AI Security Market Growth

Rising breach activity is pushing enterprises to invest in AI security tools. Spending is growing fast as companies look to protect models, data pipelines, and autonomous workflows. AI security is becoming a core cybersecurity priority.

- The global AI cybersecurity market is projected to reach USD 30.92 billion in 2025 as organizations expand investment in AI protection technologies.

- The AI cybersecurity market is expected to grow to USD 86.34 billion by 2030, reflecting rapid enterprise demand for AI security controls.

- This represents a compound annual growth rate of 22.8% between 2025 and 2030.

- Growth from USD 30.92 billion in 2025 to USD 86.34 billion in 2030 amounts to a total increase of roughly 179% over five years as AI adoption expands across industries.

- Nearly half of organizations now classify AI security as a top-tier budget priority due to rising breach costs and compliance risks.
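
To see how the market figures in this section relate to each other, the sketch below recomputes the compound annual growth rate and the total growth from the 2025 and 2030 projections. The `cagr` helper is written here for illustration and applies the standard formula, (end / start)^(1 / years) - 1.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate between two values over a number of years."""
    return (end_value / start_value) ** (1 / years) - 1


market_2025_busd = 30.92  # projected market size in 2025, USD billions
market_2030_busd = 86.34  # projected market size in 2030, USD billions

annual_rate = cagr(market_2025_busd, market_2030_busd, years=5)
total_growth = market_2030_busd / market_2025_busd - 1

print(f"CAGR 2025-2030: {annual_rate * 100:.1f}%")  # CAGR 2025-2030: 22.8%
print(f"Total growth:   {total_growth * 100:.0f}%")  # Total growth:   179%
```

The recomputed rate agrees with the 22.8% CAGR, and the two market sizes imply a total increase of about 179% over the five-year period.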

AI Incident Causes and Attack Vectors

AI breaches follow clear technical patterns such as supply chain attacks, prompt injection, and data poisoning. These attack paths exploit how models are trained, accessed, and integrated. Defending AI now requires new security approaches beyond traditional IT controls.

- 30% of all AI security incidents are caused by supply chain compromise, including infected apps, APIs, and third-party plugins connected to enterprise AI systems.

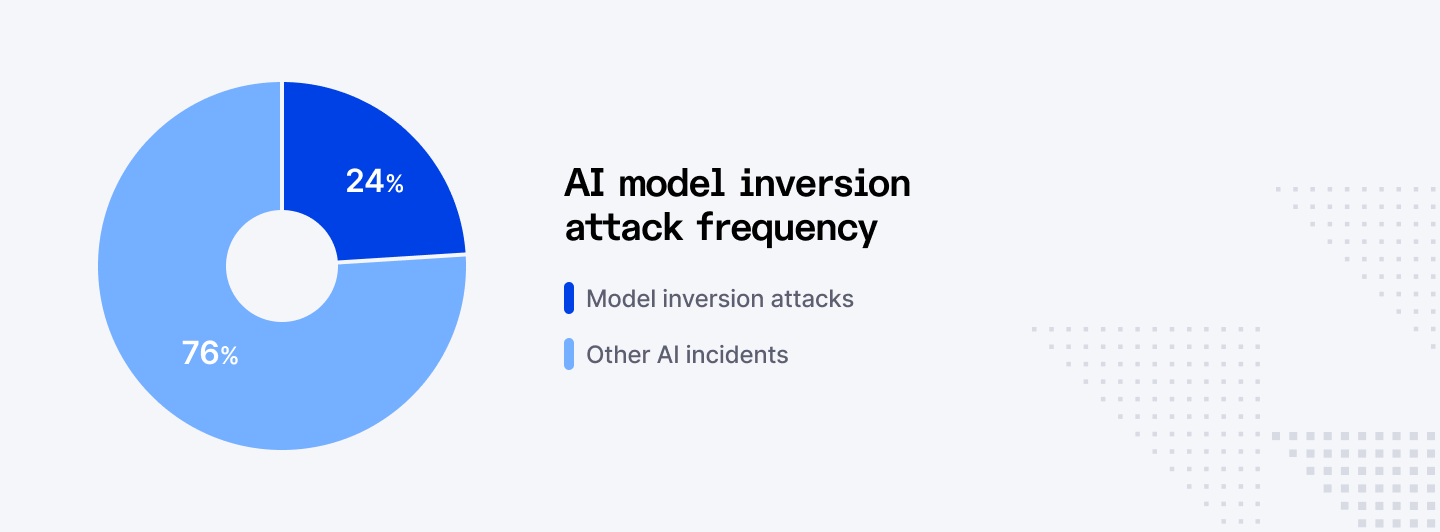

- 24% of AI incidents involve model inversion attacks, where attackers extract sensitive training data or private information directly from AI models.

- 21% of AI breaches are driven by model evasion techniques that deliberately trick AI systems into misclassifying malicious activity as safe.

- 17% of AI security incidents involve prompt injection, allowing attackers to manipulate model outputs or force unintended actions.

- 15% of AI incidents are caused by data poisoning attacks, where malicious data is injected during training to corrupt model behavior.

Final Words

AI security risks are no longer theoretical. The data shows frequent incidents, high costs, and widespread security gaps across enterprises. While AI adoption continues to grow, most organizations remain unprepared to secure autonomous systems at scale.

Companies that invest early in access control, monitoring, and governance will reduce risk and gain a long-term advantage as AI becomes central to business operations.

FAQs

How widespread are AI-related security incidents in enterprises?

86% of organizations experienced at least one AI-related security incident in the past 12 months, showing that AI systems are already a major attack surface in production environments.

How many organizations have actually suffered AI breaches?

13% of organizations report confirmed breaches of AI models or AI-powered applications, while 8% say they do not even know whether their AI systems have been compromised.

What percentage of organizations with AI breaches lacked proper access controls?

97% of organizations that suffered AI-related breaches lacked proper access controls on their AI systems at the time of the incident.

Why is shadow AI more dangerous than standard enterprise AI deployments?

Shadow AI tools operate outside approved IT and security controls, which allows sensitive data to be uploaded to external models and makes breaches harder to detect and contain.

How is AI changing the cyberattack landscape?

AI enables attackers to automate phishing, impersonation, and model manipulation at scale, making attacks faster, cheaper, and more difficult for traditional defenses to stop.

Data Sources

- https://www.mintmcp.com/blog/ai-security-statistics

- https://newsroom.ibm.com/2025-07-30-ibm-report-13-of-organizations-reported-breaches-of-ai-models-or-applications-97-of-which-reported-lacking-proper-ai-access-controls

- https://www.kiteworks.com/cybersecurity-risk-management/ibm-2025-data-breach-report-ai-risks/

- https://deepstrike.io/blog/ai-cyber-attack-statistics-2025

- https://www.brightdefense.com/resources/data-breach-statistics/

- https://www.bakerdonelson.com/webfiles/Publications/20250822_Cost-of-a-Data-Breach-Report-2025.pdf

- https://www.bluefin.com/bluefin-news/ibms-2025-data-breach-report-key-findings-and-the-years-biggest-attacks

- https://www.weforum.org/publications/artificial-intelligence-and-cybersecurity-balancing-risks-and-rewards

- https://www.ibm.com/reports/data-breach

- https://www.opentext.com/resources/enterprise-artificial-intelligence-building-trusted-ai-in-the-sovereign-cloud

- https://iaeme.com/MasterAdmin/Journal_uploads/IJRCAIT/VOLUME_7_ISSUE_2/IJRCAIT_07_02_138.pdf

- https://www.microsoft.com/en-us/security/business/ai-security

- https://www.ibm.com/security/artificial-intelligence

- https://www.cci.gov.in/sites/default/files/whats_newdocument/AI_Incident_Reporting_V1.pdf